AI is forcing open source projects to fundamentally change how they operate.

From PGP to Vouch, decentralized trust keeps breaking the same way

In January 2026, Daniel Stenberg shut down cURL's bug bounty program. Six years, $86,000 paid out, 78 genuine vulnerabilities fixed. And then: 95% of incoming reports turned to garbage. By mid-2025, submission volume had spiked to eight times the normal rate. In six years of tracking AI-only submissions, not a single one had discovered a real vulnerability. Zero.

A week later, tldraw announced it would auto-close all external pull requests. Steve Ruiz described PRs that "claimed to solve a problem we didn't have or fix a bug that didn't exist." Excalidraw saw twice as many PRs in Q4 2025 as in Q3. Mitchell Hashimoto responded by building Vouch, a trust management system for Ghostty, and releasing it as open source.

This is a symptom of a structural break in how open source contribution works.

AI-generated PRs: 1.7x more issues than human-written ones

The core problem is economic, not technical.

A CodeRabbit report from December 2025 found that AI-generated pull requests contain 1.7x more issues than human-written ones. Logic errors appear 75% more often. Readability problems show up at triple the rate. I/O performance issues occur nearly eight times as frequently. (CodeRabbit sells AI code review tools, so it has a commercial interest in these findings.) Xavier Portilla Edo, who works on the Genkit core team, estimates that only 1 in 10 AI-created PRs meets the standards required to open them.

But most of these PRs never merge. The damage is the review time they consume. A maintainer can spot slop in seconds, but writing a polite rejection, engaging with follow-up questions, and handling the social overhead of saying "no" costs orders of magnitude more time than the contributor spent generating the PR.

This asymmetry existed before AI. GitHub's design (contribution graphs, green squares, low-friction fork-and-PR workflow) optimized for making contributions easy. That worked when the bottleneck was getting people to contribute at all. Now the bottleneck is the opposite: too many contributions, not enough qualified reviewers. LLMs didn't create this dynamic. They scaled it past the breaking point.

Consider what AI-assisted contribution looks like when done well. In September 2025, a security researcher named Joshua Rogers submitted roughly 50 real bugs to cURL using AI-assisted scanning tools. Stenberg called them "actually, truly awesome findings." Rogers used AI as a research tool, tested multiple scanners, evaluated their output, and filtered results using his own expertise before submitting. The difference between Rogers and the slop: human judgment in the loop, applied with domain knowledge.

Enter Vouch

Vouch is Mitchell Hashimoto's answer. It's a contributor trust management system built around a simple idea: unvouched users can't submit pull requests.

Three states:

vouched

denounced

unknown
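As a rough sketch of that gate, the three states and the "unvouched users can't submit" rule could be modeled as below. The names `Status`, `VOUCH_LIST`, `status_of`, and `may_open_pr` are hypothetical and chosen for illustration; this is not Vouch's actual file format or API.

```python
from enum import Enum


class Status(Enum):
    VOUCHED = "vouched"
    DENOUNCED = "denounced"
    UNKNOWN = "unknown"


# Hypothetical per-project list; the real storage format may look different.
VOUCH_LIST = {
    "alice": Status.VOUCHED,
    "mallory": Status.DENOUNCED,
}


def status_of(user: str) -> Status:
    """Anyone who is not listed is treated as unknown."""
    return VOUCH_LIST.get(user, Status.UNKNOWN)


def may_open_pr(user: str) -> bool:
    """Only explicitly vouched users pass the gate."""
    return status_of(user) is Status.VOUCHED
```

Under this model a newcomer and a denounced user are both blocked from opening PRs; the difference between them only matters to whoever reads the list.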

The more ambitious feature is the web of trust: projects can read each other's vouch lists, so a developer vouched by Project A is automatically recognized by Project B. Hashimoto has already deployed it on Ghostty, where maintainers vouch new contributors by commenting "!vouch" on an issue.

"Who and how someone is vouched or denounced is up to the project," Hashimoto wrote. "I'm not the value police for the world." Each project owns its own list. This simplicity makes Vouch easy to adopt. It also makes it easy to trust too much.

We've tried this before

Decentralized trust systems have a three-decade track record, and it isn't encouraging.

Phil Zimmermann introduced the PGP Web of Trust in 1992. The idea: instead of a central certificate authority, individuals vouch for each other's identities, forming a graph of endorsements. In practice, the WoT failed for social reasons, not technical ones. Key signing was awkward, nobody revoked expired trust, and the graph never grew dense enough to be useful outside small, motivated communities.

An important distinction: PGP was solving identity verification (is this key really Alice's?). Vouch is solving contribution quality filtering (should we spend review time on this person?). Different trust problems, different incentive structures. But the failure modes overlap more than they diverge.

Advogato tried transitive trust for free software developers: if you trust Alice, and Alice trusts Bob, you partially trust Bob. Transitive trust weakens with every hop, and the system depended entirely on personal contacts, which meant it added nothing beyond what tight-knit teams already had.
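To make the "weakens with every hop" point concrete, here is a toy model in which trust along a chain is the product of each hop's weight. This is an illustrative assumption, not Advogato's actual trust metric, and the 0.8 weights are made up.

```python
from math import prod


def path_trust(edge_weights: list[float]) -> float:
    """Trust along a chain, modeled as the product of per-hop weights in [0, 1]."""
    return prod(edge_weights)


# Hypothetical weights: every link in the chain is trusted at 0.8.
one_hop = path_trust([0.8])               # you -> Alice
two_hops = path_trust([0.8, 0.8])         # you -> Alice -> Bob
three_hops = path_trust([0.8, 0.8, 0.8])  # you -> Alice -> Bob -> Carol

print(round(one_hop, 3), round(two_hops, 3), round(three_hops, 3))
# prints: 0.8 0.64 0.512
```

Even with fairly strong individual links, the score drops to roughly half by the third hop, which is why such systems end up relying on direct personal contacts.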

eBay's feedback system, often cited as a reputation success story, actually depends on credit card chargebacks and real-world legal enforcement. The reputation score alone doesn't prevent fraud; it's a signal layered on top of hard institutional backing. Open source has no equivalent. There are no chargebacks on "wasted review time," no legal enforcement behind a denounce list. Reputation is all there is, and reputation alone has never been enough.

There's a counter-example worth acknowledging: the Linux kernel. Its Signed-off-by chain and subsystem maintainer hierarchy function as a vouch system, and they've worked at massive scale for over 20 years. But the kernel model succeeds because of specific conditions: professional maintainers with real career consequences for bad vouches, a clear hierarchical authority structure, and a community where vouching carries genuine risk to the voucher's standing.

The lesson: reputation systems work in small, high-trust communities where participants know each other, or in hierarchies with real consequences. They do not work at scale:

Gaming is cheap. Attackers create sockpuppet accounts, build fake reputations through trivial interactions, then exploit trust for one big payoff.

Trust is contextual. Someone trusted for JavaScript contributions isn't necessarily trustworthy for security reviews. Collapsing trust into a single score loses information that matters.

Nobody maintains the graph. Vouchers don't revoke trust when circumstances change. Stale endorsements accumulate. The graph becomes noise.

"Who rates the raters?" has no good answer. Every layer of meta-trust introduces the same problems it was supposed to solve.

Vouch's local-first design (per-project lists rather than a global score) avoids the worst centralization failure modes. But the web-of-trust feature reintroduces exactly the cross-project trust transfer that collapsed PGP and email blacklists. When Project B imports Project A's vouch list, a lax voucher in Project A becomes a vulnerability in Project B. One compromised hub contaminates the entire downstream graph.
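The contamination path can be sketched in a few lines. The project names, accounts, and the transitive import behavior below are assumptions for illustration; whether and how a real deployment chains imports depends on how each project configures it.

```python
from typing import Optional

# Hypothetical data: each project's own vouches and the projects it imports from.
OWN_VOUCHES = {
    "project_a": {"alice", "sockpuppet"},  # a lax maintainer vouched a bad account
    "project_b": {"bob"},
    "project_c": {"carol"},
}
IMPORTS = {
    "project_b": ["project_a"],
    "project_c": ["project_b"],
}


def effective_vouches(project: str, seen: Optional[set] = None) -> set:
    """Union of a project's own vouches and everything reachable via imports."""
    seen = set() if seen is None else seen
    if project in seen:  # guard against import cycles
        return set()
    seen.add(project)
    vouched = set(OWN_VOUCHES.get(project, set()))
    for upstream in IMPORTS.get(project, []):
        vouched |= effective_vouches(upstream, seen)
    return vouched


print(sorted(effective_vouches("project_c")))
# prints: ['alice', 'bob', 'carol', 'sockpuppet']
```

Project C never evaluated `sockpuppet` and does not import from Project A directly, yet the account ends up on its effective list.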

The inclusion problem

Maintainers need to filter. The question is whether they filter on the right axis (code quality, project alignment) or on the wrong one (social connections, wealth, existing reputation).

The historical promise of open source was meritocratic access: submit a good patch, and it doesn't matter who you are, where you live, or who you know. That promise was always imperfect (the Linux kernel, Apache, and Debian all had gatekeeping long before AI), but it was real enough that outsiders could prove themselves through public work. Vouch systems can replace "judge the patch" with "judge the person's network."

The cold start problem. A competent developer with no existing connections to a project has no path to getting vouched. The documented bootstrapping path ("open a discussion, demonstrate understanding, get noticed") requires social skills and persistence that have nothing to do with code quality. Introverts, non-native English speakers, and people from different cultures can be disadvantaged.

Denounce lists carry legal and social risk. The Dutch tax authority was fined by the Autoriteit Persoonsgegevens for maintaining a fraud blacklist without proper legal basis. The legal analogy to open-source denounce lists isn't exact (government blacklists of citizens differ from project-maintained lists of GitHub usernames), but GDPR's reach into personal data processing is broad, and defamation liability applies everywhere.

Credential creep. Once vouch lists exist across enough projects, they'll become hiring signals and social capital. Participation shifts from skill-based to network-based.

Angie Jones, who leads the Goose project, took a different approach: instead of blocking external PRs, she created a `HOWTOAI.md` file guiding contributors on responsible AI use. It filters on behavior, not identity. Whether it scales is an open question, but the philosophy is sound: tell people how to contribute well rather than deciding in advance who's allowed to try.

What would actually work?

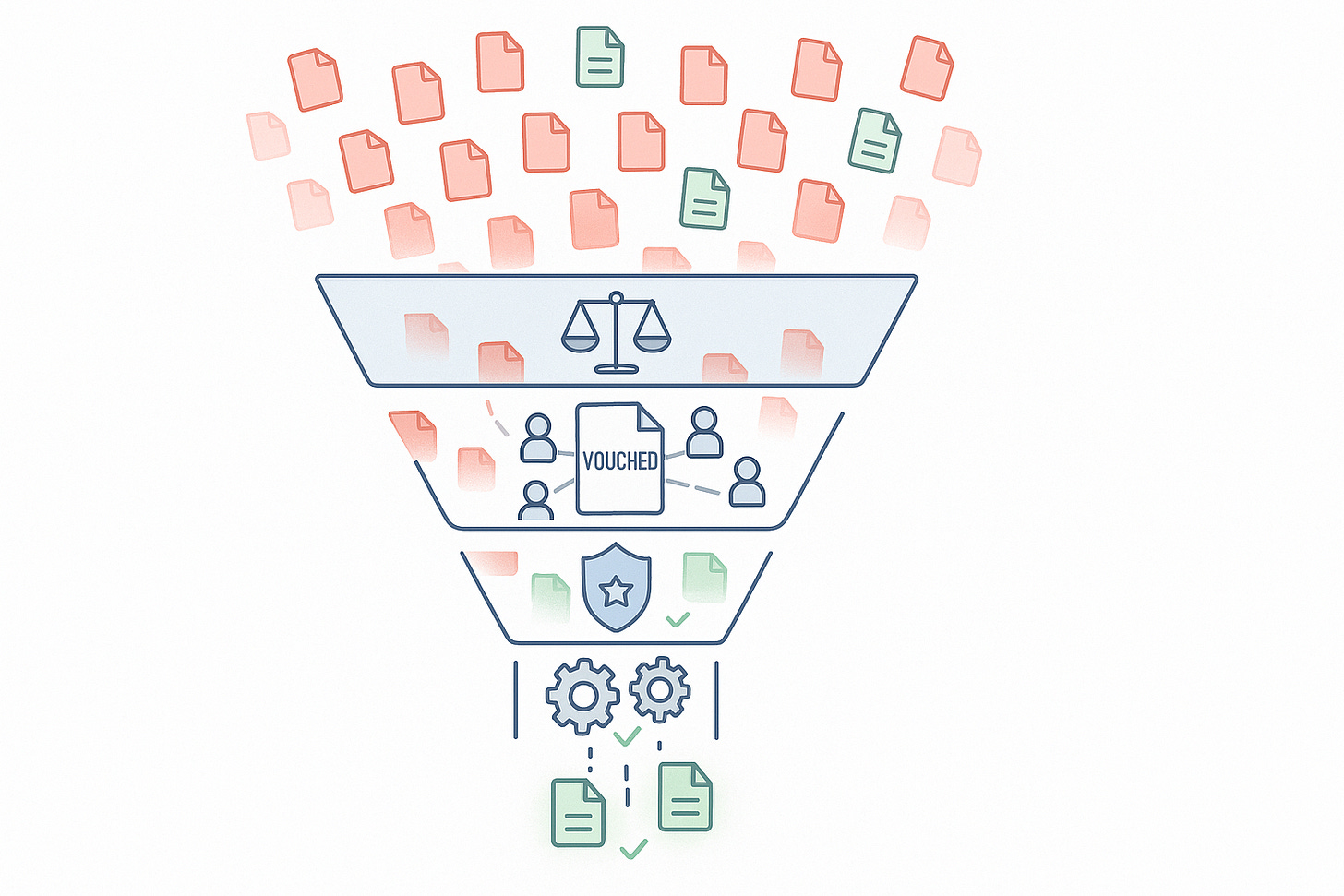

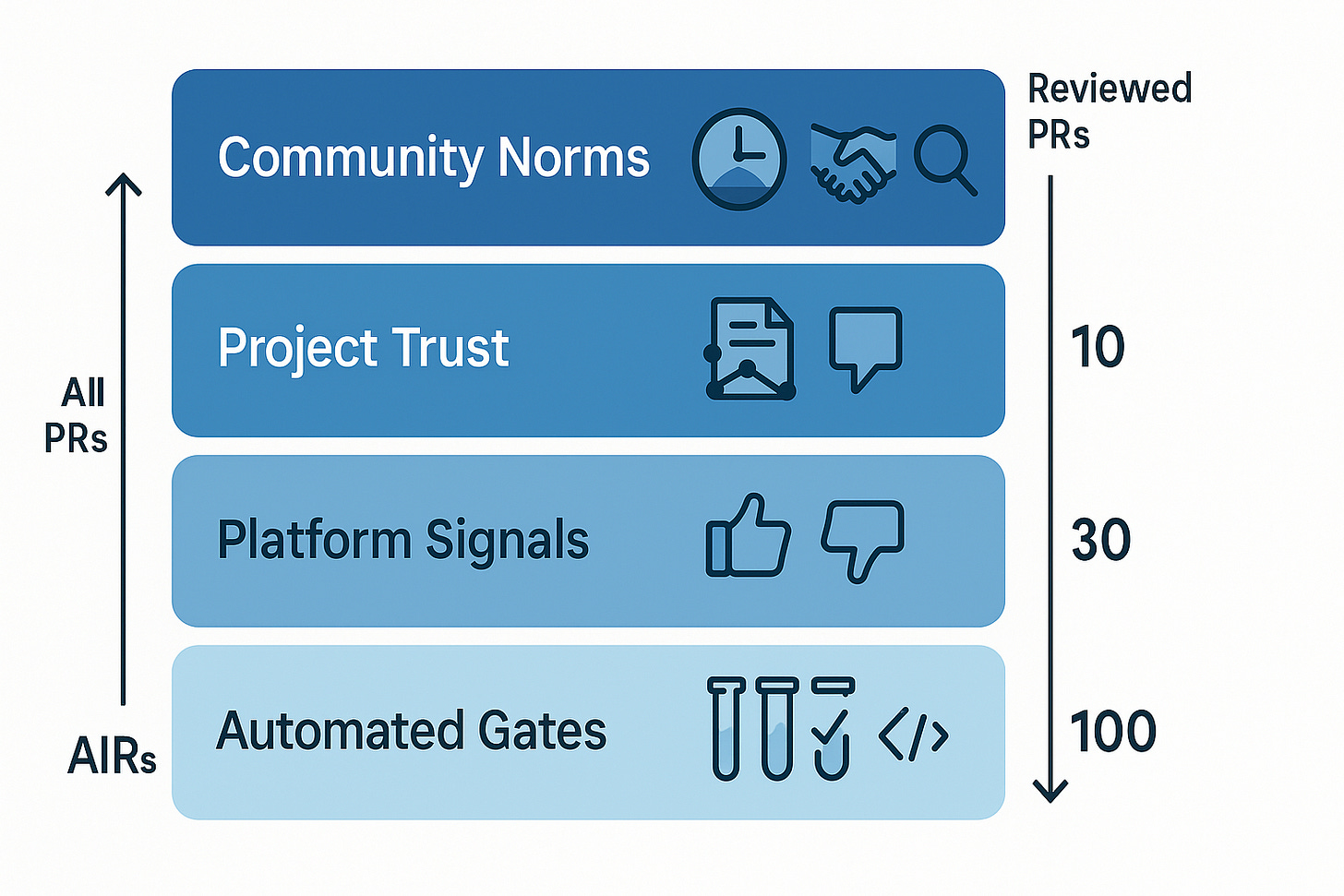

Vouch alone fails. A real solution requires layered defense, and the first layer isn't social at all.

Layer 1: Automated quality gates.

Before any human sees a PR, it should pass automated checks: test suite, linting, static analysis, and a mandatory "describe what you changed and why" template. This is boring, unsexy infrastructure, but it works. Projects that enforce comprehensive CI gates report significant drops in spam PRs because much LLM-generated code fails the test suite and the people submitting it don't bother filling in structured templates. CLA/DCO signing requirements add another lightweight barrier that filters casual spam while imposing minimal burden on serious contributors.
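The template check is the easiest of these gates to sketch. The function below assumes a markdown PR description with `## What changed` and `## Why` headings; those heading names, the word threshold, and `missing_sections` itself are hypothetical, and a real project would wire something like this into its own CI.

```python
import re

REQUIRED_SECTIONS = ("What changed", "Why")  # hypothetical template headings
MIN_WORDS_PER_SECTION = 5                    # arbitrary threshold for illustration


def missing_sections(pr_body: str) -> list:
    """Return the required template sections that are absent or too short."""
    # Split on "## Heading" lines; the capture group keeps the heading text.
    parts = re.split(r"^##[ \t]+(.+?)[ \t]*$", pr_body, flags=re.MULTILINE)
    # parts is [preamble, heading1, body1, heading2, body2, ...]
    sections = {
        parts[i].strip().lower(): parts[i + 1]
        for i in range(1, len(parts) - 1, 2)
    }
    missing = []
    for name in REQUIRED_SECTIONS:
        body = sections.get(name.lower(), "")
        if len(body.split()) < MIN_WORDS_PER_SECTION:
            missing.append(name)
    return missing
```

A CI job could fail the PR whenever `missing_sections(body)` is non-empty. A word count is trivially gameable, so this only raises the floor; it does not judge whether the explanation is any good.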

Automated gates should be the first line. Vouch-style systems are the second layer for what gets past.

Layer 2: Platform-level signals.

GitHub is already exploring configurable PR permissions, the ability to delete PRs, and AI-assisted triage. But the deeper fix is negative reputation signals. When a maintainer marks a PR as low-quality or spam, that signal should follow the contributor (project-scoped at first, aggregated across projects with consent).

This is the highest-leverage intervention because it addresses the problem at the layer that created it. GitHub made contributing cheap; GitHub should make contributing cheaply carry a cost.

There are risks here too. Negative reputation signals can chill legitimate contributions, enable retaliation, and create gaming incentives in the other direction (maintainers weaponizing spam flags against contributors they personally dislike).

Layer 3: Project-level trust with explicit on-ramps.

Vouch's per-project file format is the right starting point, but projects need to design explicit bootstrapping paths: staging repos, mentorship channels, small initial tasks that earn trust without requiring social capital.

Vouching must carry risk. If you vouch for someone who then submits spam, your vouching power should be suspended or reduced. Without skin in the game, vouch lists degrade into rubber stamps, exactly like PGP key signing. The Linux kernel's Signed-off-by chain works because a bad vouch damages the voucher's reputation with the subsystem maintainer. A flat file with no consequences won't replicate that dynamic.
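One way to picture "skin in the game" is a vouching weight that shrinks whenever someone you vouched for is flagged. Everything in this sketch is an assumption: the `Voucher` class, the halving penalty, and the suspension threshold are illustrative choices, not part of Vouch.

```python
from dataclasses import dataclass, field

PENALTY = 0.5      # arbitrary: each bad vouch halves the voucher's weight
MIN_WEIGHT = 0.25  # arbitrary: below this, vouching is suspended


@dataclass
class Voucher:
    name: str
    weight: float = 1.0  # hypothetical "vouching power"
    vouched_for: set = field(default_factory=set)


def can_vouch(voucher: Voucher) -> bool:
    return voucher.weight >= MIN_WEIGHT


def vouch(voucher: Voucher, user: str) -> None:
    if not can_vouch(voucher):
        raise PermissionError(f"{voucher.name} is suspended from vouching")
    voucher.vouched_for.add(user)


def report_spam(vouchers: list, user: str) -> None:
    """Withdraw the vouch and penalize everyone who vouched for `user`."""
    for voucher in vouchers:
        if user in voucher.vouched_for:
            voucher.vouched_for.discard(user)
            voucher.weight *= PENALTY
```

With these numbers a maintainer can absorb two bad vouches (1.0, then 0.5, then 0.25) and is suspended after the third. The right penalty curve is a policy question that each project would have to settle for itself.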

Layer 4: Behavioral filtering over identity filtering.

The goal should be filtering on artifact quality and contributor behavior (test coverage, issue discussion, follow-through on reviews), not on identity or social standing:

Rich, purpose-bound attestations ("I've reviewed 3 PRs from this person and they were well-tested") rather than binary vouch/denounce

Sunset mechanisms: trust that expires unless renewed, preventing stale endorsement buildup

Appeal paths for denouncement, with mandatory reasons

Explicit scope: a code quality denouncement is different from a conduct denouncement, and mixing them corrupts both
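A data model along these lines might look like the sketch below. The `Attestation` class, the scope strings, and the 180-day sunset are hypothetical choices made for illustration; none of this is an existing Vouch feature.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Attestation:
    voucher: str
    subject: str
    scope: str           # e.g. "code-quality" or "conduct"; never mixed
    reason: str          # mandatory justification, useful for appeals
    issued: date
    ttl_days: int = 180  # arbitrary sunset period

    def __post_init__(self) -> None:
        if not self.reason.strip():
            raise ValueError("an attestation needs a reason")

    def is_active(self, today: date) -> bool:
        """Trust expires unless someone issues a fresh attestation."""
        return today < self.issued + timedelta(days=self.ttl_days)


def active_for(attestations: list, subject: str, scope: str, today: date) -> list:
    """Attestations about `subject` that match `scope` and have not expired."""
    return [
        a for a in attestations
        if a.subject == subject and a.scope == scope and a.is_active(today)
    ]
```

Because every record carries a scope and a reason, a query for code quality never picks up a conduct record, and an appeal has a concrete statement to contest.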

The decade we're designing right now

The scarce resource in open source has never been code. It's review attention. AI made that imbalance catastrophic. Every proposed solution (vouch lists, paywalls, platform restrictions) is an attempt to rebalance the equation.

If the community just builds walls, open source becomes another credentialed club: access by network, not by skill.

Vouch is a reasonable first move for projects drowning in slop. But treating it as the answer, rather than one layer in a multi-level response, repeats the mistakes of every decentralized trust system that came before. People are complicated, trust is contextual, and elegant systems break when they meet the mess of human social dynamics.