Recursion Prompting: from 'make it blue' to building a color theory system

How AI tools accelerate the climb from tasks to systems


You wanted a blue button.

You opened Claude, typed "Change the button color to blue". Simple. Direct. Done.

Then you paused. Is blue the right color? You typed: "What's the best color for a call-to-action button?"

The model gave you principles. Contrast ratios. Color psychology. You learned something.

Then: "Help me create a framework for choosing button colors."

Then: "How do I evaluate whether a design decision framework is good?"

Then: "Build me a system that generates context-appropriate color recommendations based on user research and brand guidelines."

You started with a blue button. You ended with a color theory system. The button is still not blue.

This pattern has several names

Programmers have had names for variations of it for over two decades: "yak shaving", and the "architecture astronauts" Joel Spolsky wrote about in 2001.

What's new is how AI accelerates the climb. When generating frameworks costs nothing, you generate frameworks. When asking meta-questions takes seconds, you ask meta-questions. The friction that used to slow abstraction is gone.

I'll call it Recursion Prompting: the tendency to climb from task execution to decision-making to meta-decision-making until you're building systems instead of doing work.

Researchers have formalized adjacent ideas. Zhang et al. (2024) described Meta Prompting as prompting techniques focused on structural patterns rather than content. The LADDER framework combined recursive problem decomposition with reinforcement learning on self-generated, easier problem variants, and improved Llama 3.2 3B's accuracy on undergraduate-level integration problems from 1% to 82%. Both are techniques for getting better outputs from models.

What practitioners experience is different: a behavioral pattern where each rung feels productive while the original task drifts further away.

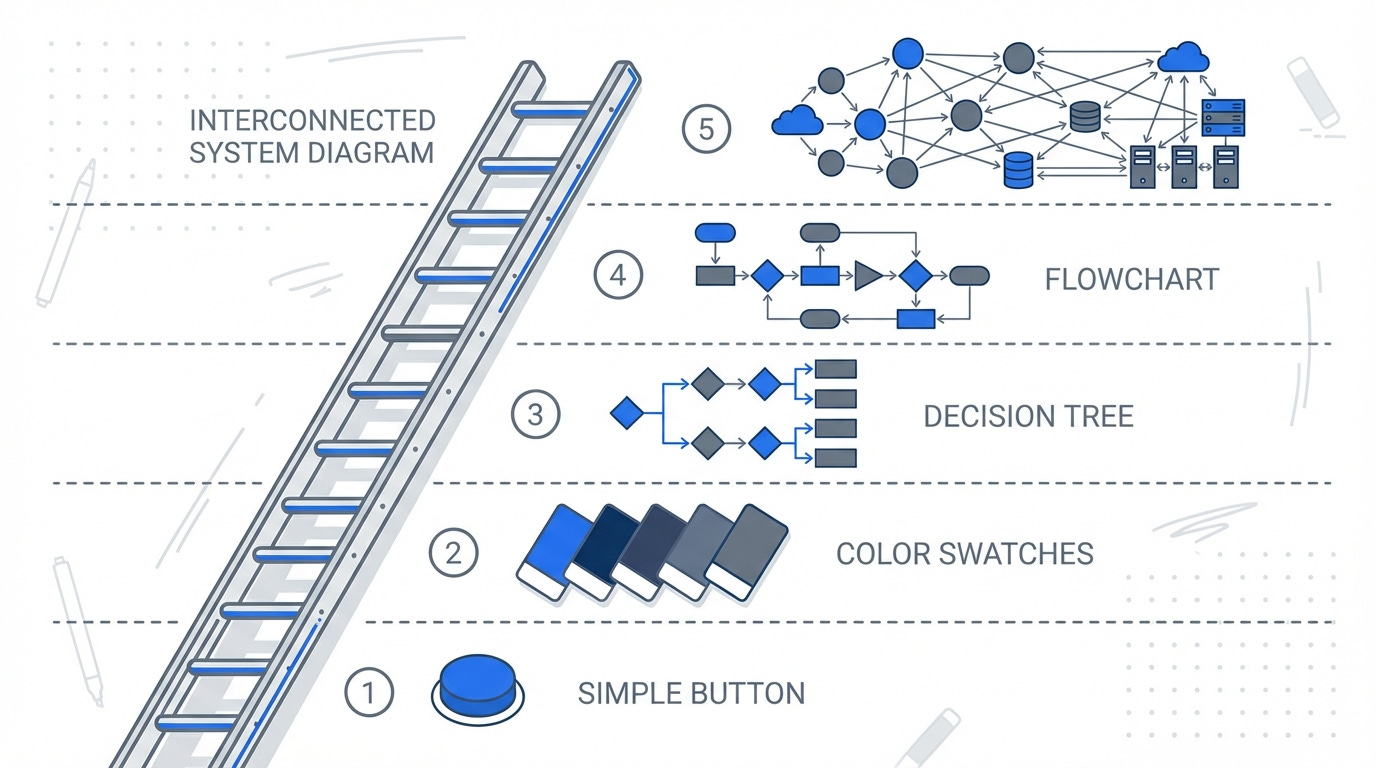

Five rungs

Rung 1: From action to decision

You stop asking the AI to do things. You start asking it to help you choose.

Level 0: "Make the button blue"

Level 1: "What color should this button be?"

The AI becomes a collaborator rather than a typist.

Rung 2: From implicit to explicit reasoning

Your reasoning, which used to happen silently in your head, becomes an object the AI can examine.

Level 1: "What color should this button be?"

Level 2: "What factors should I consider when choosing button colors?"

You're not asking for an answer. You're asking for a method.

Rung 3: From specific to abstract

You strip away the context. The domain disappears.

Level 2: "What factors should I consider when choosing button colors?"

Level 3: "What's a good framework for design decisions?"

Blue button becomes "color". Color becomes "design decision". Design decision becomes "decision framework". The original problem barely matters now.

Rung 4: From chain to loop

The output becomes the input. You ask the AI to evaluate its own responses.

Level 3: "What's a good framework for design decisions?"

Level 4: "Critique this framework. What's missing? Improve it."

The Self-Refine technique (Madaan et al., 2023) formalizes this loop: generate an output, have the model critique it, refine the output using that feedback, repeat. At this rung you're still in the loop, guiding each iteration.
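
The Self-Refine loop can be sketched in a few lines. This is a minimal sketch under stated assumptions, not the paper's implementation: `llm` stands for any hypothetical function that sends a prompt to a model and returns its reply, and the prompt wording and the `NO ISSUES` stop signal are illustrative choices.

```python
from typing import Callable

# Hypothetical model interface: any function that takes a prompt and returns the reply text.
LLM = Callable[[str], str]


def self_refine(llm: LLM, task: str, max_rounds: int = 3, stop_signal: str = "NO ISSUES") -> list[str]:
    """Draft, critique, refine, repeat. Returns every draft, oldest first."""
    drafts = [llm(f"Task: {task}\nWrite a first draft.")]
    for _ in range(max_rounds):
        # Feedback step: the model critiques its own latest draft.
        feedback = llm(
            f"Critique this draft for the task '{task}'. "
            f"Reply '{stop_signal}' if nothing is missing.\n\n{drafts[-1]}"
        )
        if stop_signal in feedback:
            break  # the critic is satisfied, so stop iterating
        # Refinement step: rewrite the latest draft using the feedback.
        drafts.append(llm(
            f"Task: {task}\nDraft:\n{drafts[-1]}\n"
            f"Feedback:\n{feedback}\nRewrite the draft to address the feedback."
        ))
    return drafts
```

Replacing the model's critique with your own reading of each draft keeps you in the loop, which is what Rung 4 describes. Letting the model handle every step is already a move toward Rung 5.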

Rung 5: From iteration to automation

You remove yourself from the loop. The system runs without you.

Level 4: "Critique and improve this framework"

Level 5: "Build a system that generates, evaluates, and improves design frameworks automatically"

You're no longer solving problems. You're building problem-solving infrastructure. The blue button has been abstracted out of existence.

Why the ladder exists

It works for certain problems. Recursive decomposition genuinely improves model performance on complex tasks. Andrej Karpathy described context engineering as "the delicate art and science of filling the context window with just the right information for the next step." Structured requests produce better outputs.

It feels productive. Each rung feels like progress. You're thinking harder, being systematic. Ethan Mollick observed that iteration with AI teaches you how to use AI. Climbing the ladder is a form of iteration.

AI removes the friction. Before LLMs, building a framework meant hours of work. Now it takes seconds. The cost of abstraction collapsed.

When climbing is correct

The ladder isn't always procrastination. Sometimes it's exactly right.

Recurring decisions. If you'll face this choice a hundred times, build the framework. A design system team that spends months on color tokens can save hundreds of hours per quarter.

Capability building. A junior designer asking "what factors should I consider?" is learning, not procrastinating. The ladder is education.

Team knowledge. Frameworks capture decisions. When you leave, the framework stays. Sometimes the system IS the deliverable.

The 3x rule: If you'll face this decision type more than 3 times, climb one rung. More than 10 times, climb two. Three times or fewer, one-offs included, stay at Rung 1.
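
Written as code, the 3x rule is a function with two thresholds on how often you expect to face the decision. A minimal sketch: the name `extra_rungs` is hypothetical, and it returns how many rungs to climb above Rung 1. Because the rule says "more than", exactly 3 repeats means staying put and exactly 10 means climbing one rung.

```python
def extra_rungs(expected_repeats: int) -> int:
    """Apply the 3x rule: how many rungs to climb above Rung 1.

    Anything at or below three repeats, one-offs included, is
    treated as a reason to stay concrete.
    """
    if expected_repeats > 10:
        return 2  # more than 10 times: climb two rungs
    if expected_repeats > 3:
        return 1  # more than 3 times: climb one rung
    return 0      # stay at Rung 1 and make the decision
```

The useful part is the input, not the arithmetic: you have to estimate the repeat count before you start climbing.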

When climbing is procrastination

Simon Willison called LLMs "chainsaws disguised as kitchen knives". The power tempts you to cut down forests when you need to slice bread.

Signs you're climbing to avoid work:

You're refining a framework you'll use once

The original task would take less time than building the system

You've spent more time on meta-decisions than the decision itself

You keep finding one more rung to climb

The ladder has no top. You can always add another layer of abstraction.

Practical moves

Before your next prompt, ask: Am I on Rung 1 or Rung 4? No wrong answer. Just know where you are.

Apply the 3x rule. One-off decision? Stay concrete. Recurring pattern? Climb deliberately.

Use the loops if you climb to Rung 4. Ask the AI to critique and improve. The feedback loop is where value accumulates, but only if you close it.

Set a descent trigger. Before climbing, decide what will make you descend. "I'll spend 10 minutes on the framework, then pick a color."
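
A descent trigger like "10 minutes on the framework, then pick a color" can be written as a budgeted loop. A minimal sketch under assumptions: `improve` stands for whatever refinement step you use (a Rung 4 critique prompt, say), `decide` stands for the concrete choice, and both names, the round cap, and the injectable `clock` are illustrative, not part of any library.

```python
import time
from typing import Callable


def climb_with_descent_trigger(
    improve: Callable[[str], str],
    decide: Callable[[str], str],
    framework: str,
    budget_seconds: float,
    max_rounds: int = 5,
    clock: Callable[[], float] = time.monotonic,
) -> str:
    """Refine `framework` until the time budget or round cap runs out,
    then descend: always return a concrete decision, never the framework."""
    deadline = clock() + budget_seconds
    for _ in range(max_rounds):
        if clock() >= deadline:
            break  # descent trigger fired: stop climbing
        framework = improve(framework)
    return decide(framework)
```

For the 10-minute version, pass `budget_seconds=600`. The design choice that matters is the return value: the function always ends in a decision, however good or bad the framework got.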

The pattern exists. We all do it. Now it has a name.

The question isn't whether to climb. It's whether you're climbing on purpose.

Now make the button blue.